1. Introduction: The Vision of Frictionless Shopping

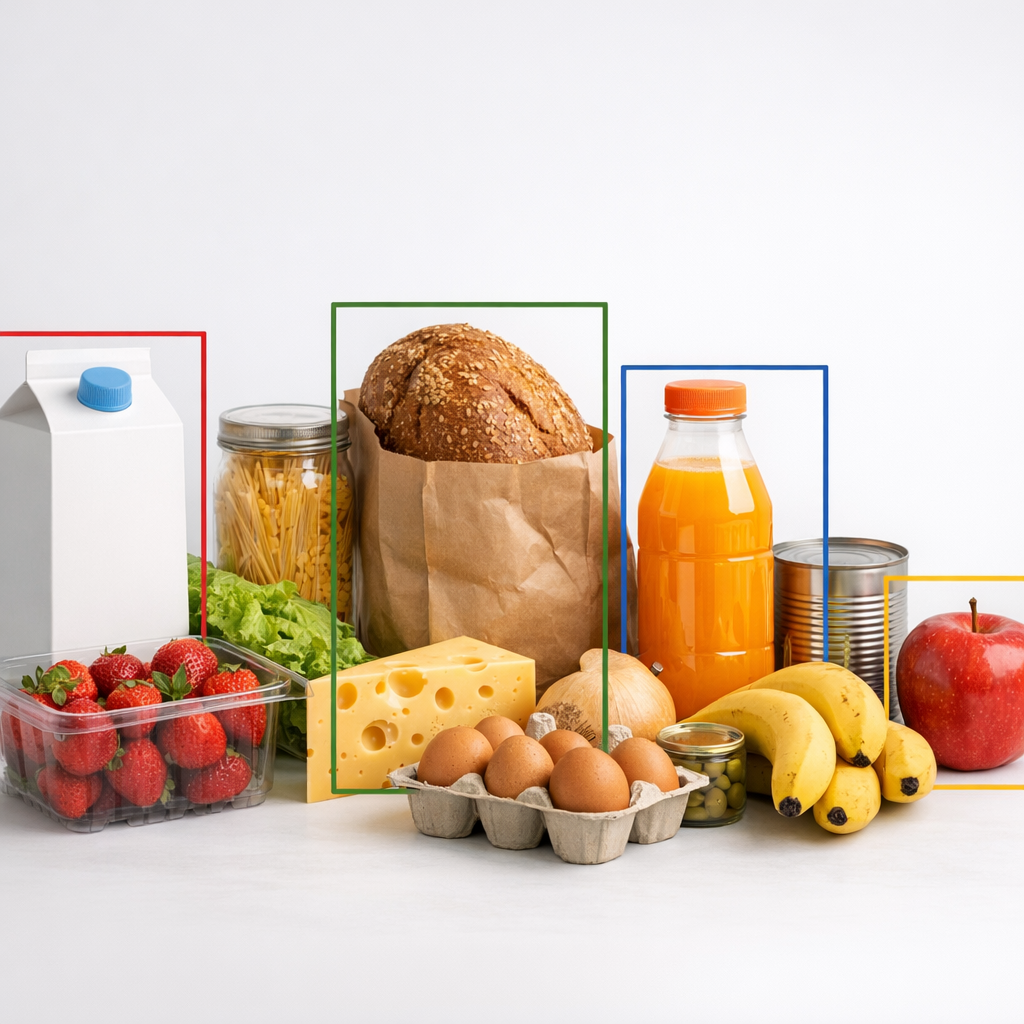

For decades, the grocery checkout has depended on the manual coordination of eye and hand: a clerk or shopper identifies an item, hunts for its barcode, and makes sure the scanner registers the price. The process is slow and vulnerable to human error. With computer vision (CV), the challenge shifts from finding a sticker to interpreting a scene. Identifying a crumpled bag of chips under harsh fluorescent lighting, or a half-hidden carton of milk, runs into the classic problems of variable lighting and occlusion, and solving them takes a trained detection model rather than a simple sensor. The “streamlit-object-detection” project serves as a proof of concept for this transformation, using the YOLO (You Only Look Once) model to shift the burden of identification from the human to the algorithm.

2. Moving Beyond Static Snapshots to Real-Time Video Interaction

The architecture of the repository betrays its ambition; the presence of WebRTC integration in the commit logs and a dedicated /pages directory for video detection signals a strategic move toward real-time utility. In a retail environment, a static photo is rarely sufficient; items are in constant motion, transitioning from a cart to a conveyor or into a bag.

By moving beyond still images, the application addresses the fluid nature of commerce. This transition turns the tool from a one-shot image classifier into a monitoring system intended to keep pace with a modern checkout line. The repository states its own mission in a single line:

“Grocery items object detection using Yolo model.”

By implementing live stream support, the project demonstrates how CV can move from a post-facto analysis tool to an active participant in the retail workflow.
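To make the real-time constraint concrete, here is a minimal sketch of one common way to keep a live stream responsive: run the expensive detector only on every Nth frame and reuse the previous result in between. This is an illustration, not the repository's code. `ThrottledDetector`, the `detect` callable, and the `Detection` tuple layout are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical detection record: (label, confidence, (x, y, width, height)).
Detection = Tuple[str, float, Tuple[int, int, int, int]]


@dataclass
class ThrottledDetector:
    """Run `detect` on every Nth frame and reuse the last result otherwise."""

    detect: Callable[[object], List[Detection]]  # stand-in for the YOLO call
    every_n: int = 3
    _frame_index: int = 0
    _last: List[Detection] = field(default_factory=list)

    def __post_init__(self) -> None:
        if self.every_n < 1:
            raise ValueError("every_n must be at least 1")

    def process(self, frame: object) -> List[Detection]:
        # Only pay the inference cost on frames 0, N, 2N, ...
        if self._frame_index % self.every_n == 0:
            self._last = self.detect(frame)
        self._frame_index += 1
        return self._last


if __name__ == "__main__":
    def fake_detect(frame: object) -> List[Detection]:
        # Placeholder detector so the sketch runs without a model.
        return [("milk", 0.9, (0, 0, 10, 10))]

    throttled = ThrottledDetector(detect=fake_detect, every_n=3)
    for frame_number in range(7):
        print(frame_number, throttled.process(frame_number))
```

In a live-video page, `process` would be called from whatever per-frame callback the WebRTC integration exposes. The trade-off is that boxes can lag fast-moving items by up to `every_n - 1` frames.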

3. Hardware-Agnostic Performance: The Strategic Shift to ONNX

Within the /models folder lies a critical file: best.onnx. From a computer vision specialist’s perspective, the Open Neural Network Exchange (ONNX) format is a sound choice for web deployment. Raw training checkpoints are necessary for model development, but they are often bulky and tied to a specific framework such as PyTorch or TensorFlow.

By exporting to ONNX, the developer enables the use of ONNX Runtime, an inference engine that supports hardware acceleration through pluggable execution providers. This keeps the grocery detection model portable: the same file can run on CPU or GPU without the original training framework installed. Because a Streamlit app executes its Python code on the server, inference happens on the host machine rather than in the visitor's browser, so a user on a mobile phone and a user on a high-end workstation are served by the same model. The export bridges the gap between a computationally intensive training environment and the lightweight requirements of a real-time web application.
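As a rough sketch of what hardware-agnostic loading can look like, the helper below picks ONNX Runtime execution providers in a preferred order and falls back to the CPU. The preference order, the function names `pick_providers` and `load_session`, and the relative path `models/best.onnx` are assumptions for illustration. The repository may load its model differently.

```python
from typing import Iterable, List

# Assumed preference order: accelerated providers first, CPU as the fallback.
PREFERRED_PROVIDERS = [
    "CUDAExecutionProvider",
    "CoreMLExecutionProvider",
    "CPUExecutionProvider",
]


def pick_providers(
    available: Iterable[str],
    preferred: List[str] = PREFERRED_PROVIDERS,
) -> List[str]:
    """Return the preferred providers that are available, in preference order."""
    available_set = set(available)
    chosen = [name for name in preferred if name in available_set]
    # The CPU provider is assumed to be present in standard ONNX Runtime builds.
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


def load_session(model_path: str = "models/best.onnx"):
    """Create an inference session; needs the onnxruntime package and the model file."""
    import onnxruntime as ort  # imported lazily so pick_providers works without it

    providers = pick_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)


if __name__ == "__main__":
    print(pick_providers(["CPUExecutionProvider", "CUDAExecutionProvider"]))
```

Keeping the selection logic in a pure function makes it easy to check without a GPU or a model file on hand.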

4. Democratizing AI Through the Streamlit Framework

This project is a prime example of how modern frameworks have democratized sophisticated AI. Written entirely in Python, the application is hosted at a public URL (https://groceryobjdetection.streamlit.app/), making YOLO-based object detection accessible to anyone with an internet connection.

The strategy here is clear: by abstracting away the complexities of web infrastructure, a developer can focus entirely on the model’s utility. What once required a dedicated frontend team and complex backend pipelines is now consolidated into a single, cohesive repository. This lowers the barrier to entry for retail tech, allowing a local YOLO implementation to become a globally accessible tool for inventory management and customer interaction.

5. A Modular Architecture for Future Retail Tech

The project is organized around Home.py, a multi-page configuration, and a dedicated yolo_predictions.py script, a layout that reflects a disciplined approach to ML engineering. In particular, housing the detection logic in yolo_predictions.py applies a core principle of software design: decoupling the logic from the user interface.

This modularity is essential for scaling a simple detection tool into a robust retail ecosystem. By separating the “brain” (the YOLO prediction logic) from the “face” (the Streamlit UI), the system becomes highly maintainable. A developer could swap the underlying model for a more advanced version or integrate the detection logic into an automated stock-counting backend without ever needing to rewrite the interface code.
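The separation of “brain” and “face” can be sketched with a small interface. Everything below is hypothetical and is not taken from yolo_predictions.py: the `Detector` protocol, the `StubDetector` class, and `count_items` only illustrate how a stock-counting backend could reuse detection logic without touching any UI code.

```python
from collections import Counter
from typing import Dict, List, Protocol, Tuple

# Simplified, hypothetical detection record: (label, confidence).
Detection = Tuple[str, float]


class Detector(Protocol):
    """Anything with a predict() method can serve as the 'brain'."""

    def predict(self, image: object) -> List[Detection]: ...


class StubDetector:
    """Stand-in for a real YOLO wrapper so the sketch runs without a model."""

    def predict(self, image: object) -> List[Detection]:
        return [("milk", 0.91), ("chips", 0.78), ("milk", 0.42)]


def count_items(
    detector: Detector,
    image: object,
    min_confidence: float = 0.5,
) -> Dict[str, int]:
    """Count confident detections per label; knows nothing about the UI."""
    detections = detector.predict(image)
    return dict(
        Counter(label for label, conf in detections if conf >= min_confidence)
    )


if __name__ == "__main__":
    print(count_items(StubDetector(), image=None))  # {'milk': 1, 'chips': 1}
```

Swapping in a newer model then means providing another class with the same `predict` method. Neither `count_items` nor a Streamlit page that calls it would need to change.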

6. Conclusion: The Future of Intelligent Inventory

The “streamlit-object-detection” project offers a foundational blueprint for grocery stores that track their physical stock as closely as a data center tracks its servers. As these visual models evolve, we are moving toward a world of “invisible checkouts” and self-correcting carts that update your total as you shop. The line between the physical object on the shelf and its digital record is blurring, paving the way for inventory systems that manage themselves in real time.

How will our daily interactions with the physical world change when our devices understand what we are holding—and what we owe—as clearly as we do?