Machine Learning

-

PSE Stock Dashboard

This web-based Streamlit application, the product of months of dedicated work, is a PSE stock dashboard designed to predict the next day’s price movement. At its core, the app uses a tree-based machine learning model trained on an extensive set of features, including opening, high, low, and closing prices, trading volume, sentiment scores (positive, negative,…

-

Beyond the Recording: 4 Surprising Truths About Building Your Own AI Notetaker

We’ve all been there: staring at a digital recording of a critical board meeting or a complex lecture, knowing that the “information debt” we just accrued will likely never be paid. We hit record with the best intentions, but the reality of transcribing and distilling those minutes…

-

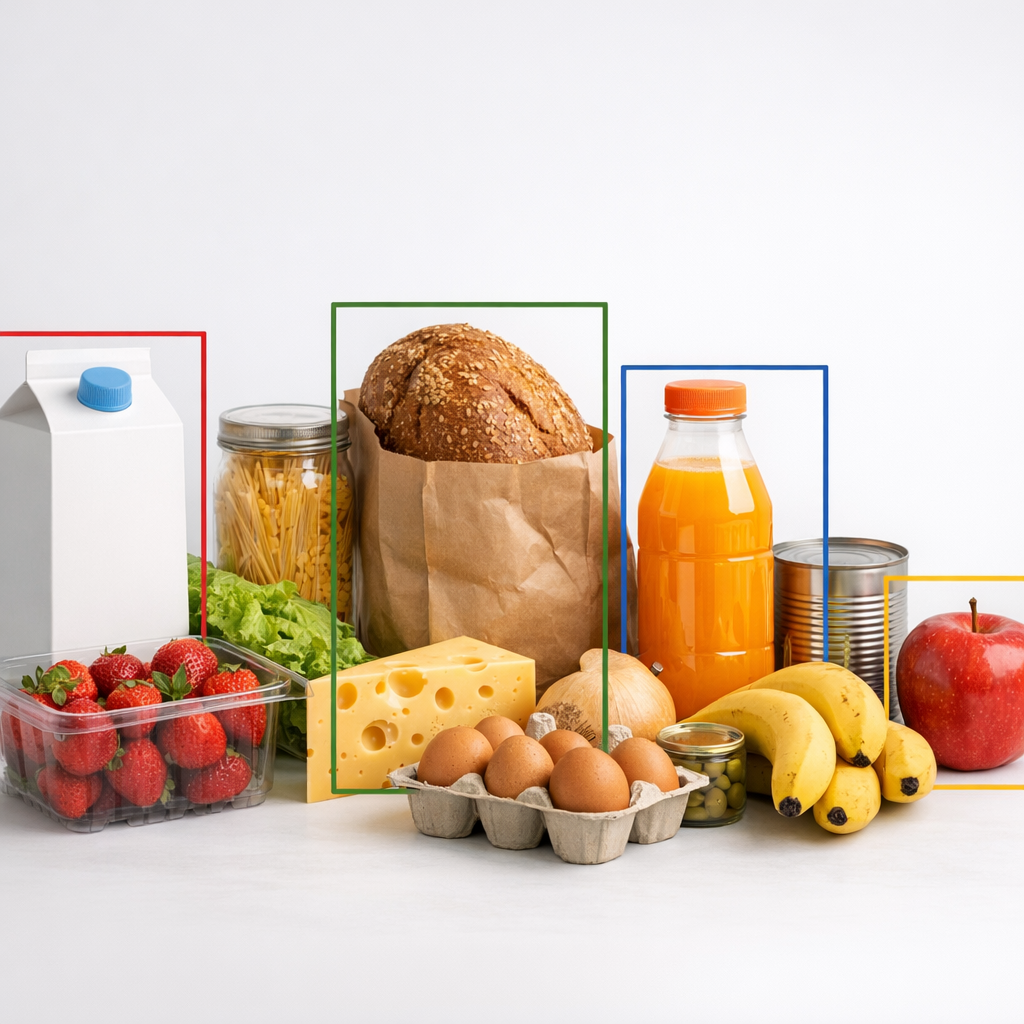

From Checkout Lines to Code Lines: How a Simple Web App is Reimagining the Grocery Experience

1. Introduction: The Vision of Frictionless Shopping. For decades, the grocery experience has been tethered to the manual synchronization of eye and hand: a clerk or shopper identifies an item, hunts for a barcode, and ensures the scanner registers the price. This process is inherently friction-heavy and vulnerable to…

-

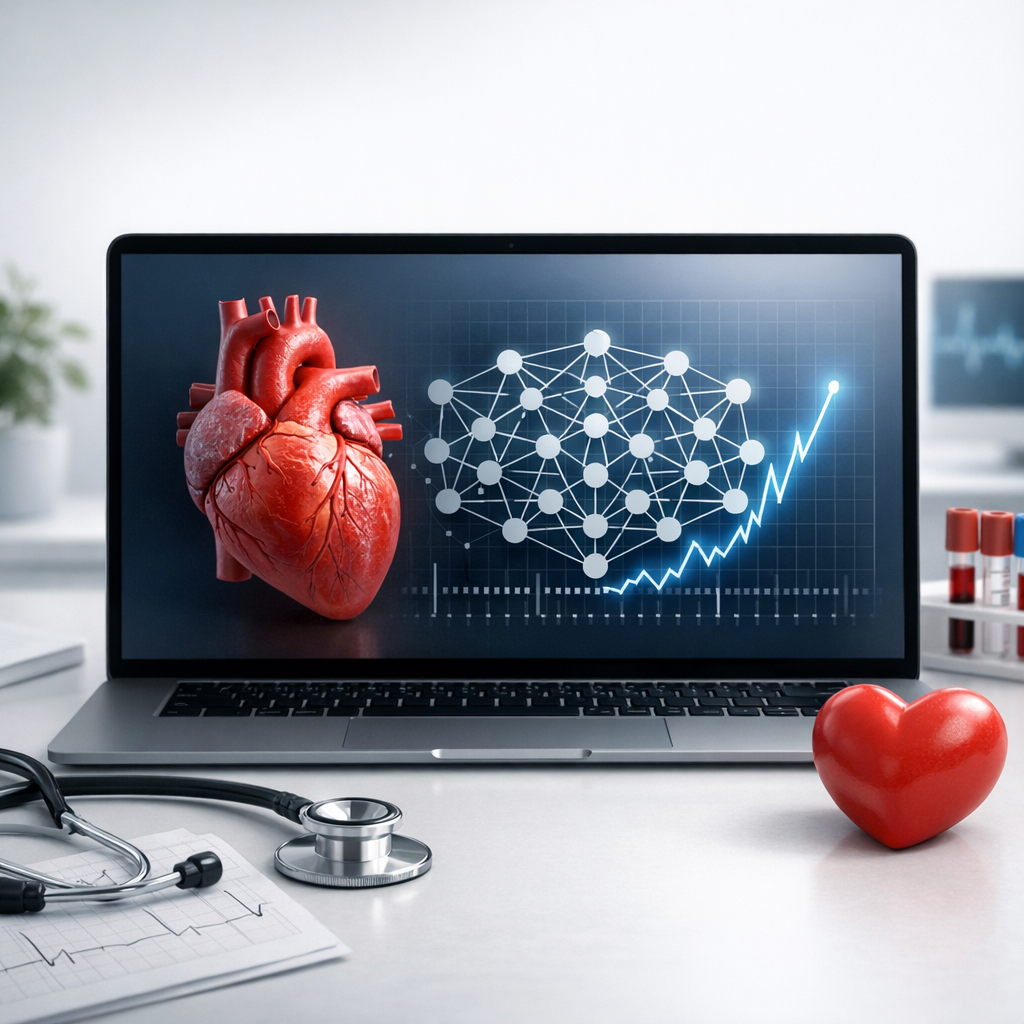

Predicting Heart Attack Risk

This study compares the performance of three models (Logistic Regression, Naïve Bayes, and an Artificial Neural Network) on predicting heart attack risk. The Artificial Neural Network outperformed the other two in accuracy, precision, recall, and F1-score, making it the best-performing model. Logistic Regression stood out for its interpretability and simplicity.

-

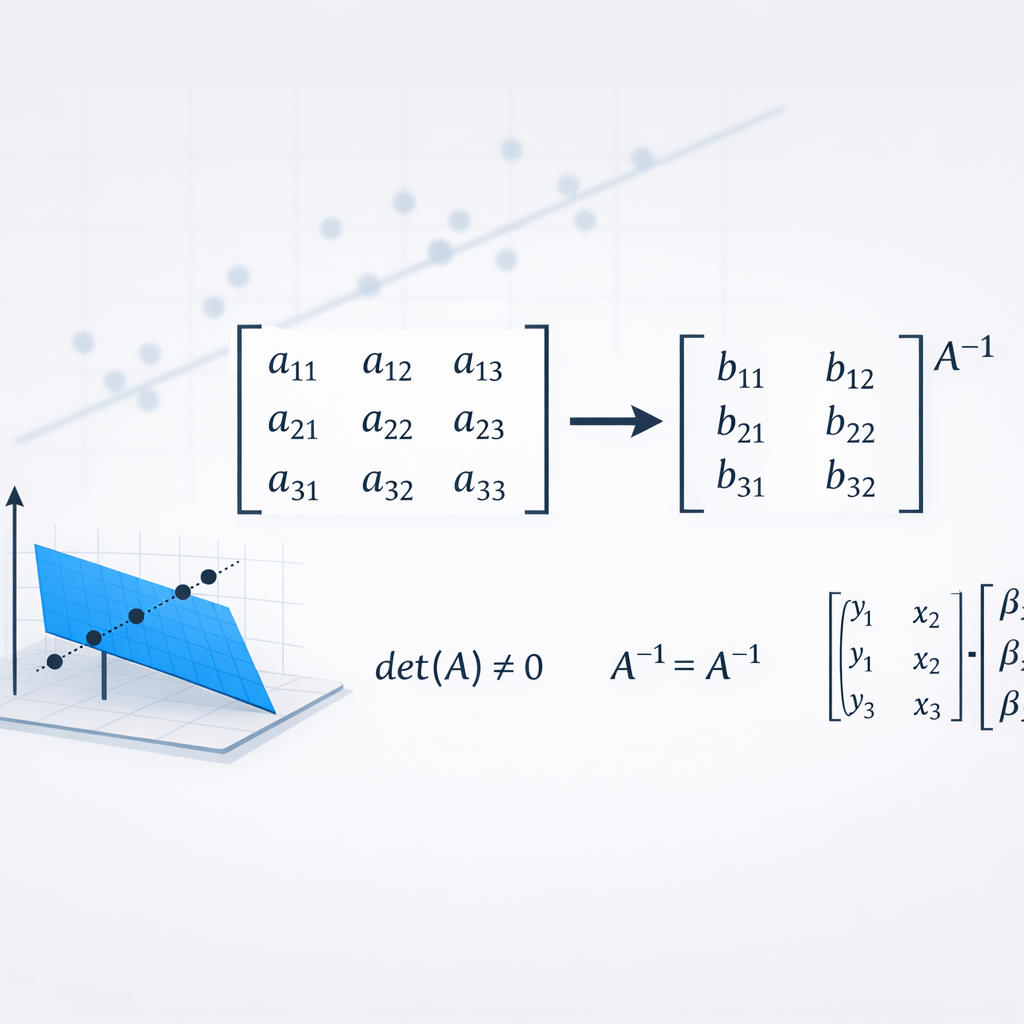

Matrix Inversion in Linear Regression

Linear regression models the relationship between variables by finding a best-fitting line. Under the Ordinary Least Squares (OLS) method, the coefficients have a closed-form solution that can be computed with matrix inversion, which gives the problem a clear mathematical framework. This guide covers fundamental concepts and practical applications of matrix algebra for solving linear regression.
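
As a rough illustration of the idea (not code from the guide itself), the OLS coefficients for a single-feature line y = b0 + b1·x can be obtained from the normal equations, b = (XᵀX)⁻¹ Xᵀy, where X is the design matrix with a column of ones and a column of x values. The sketch below uses plain Python and a hand-written 2×2 inverse; the function name `fit_line_ols` and the sample data are assumptions made for this example.

```python
def fit_line_ols(xs, ys):
    """Fit y = b0 + b1 * x via the normal equations b = (X^T X)^-1 X^T y.

    Hypothetical helper for illustration; X is the design matrix [1, x].
    """
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")

    # Entries of the 2x2 matrix X^T X = [[n, sum(x)], [sum(x), sum(x^2)]]
    sx = sum(xs)
    sxx = sum(x * x for x in xs)

    # Entries of the vector X^T y = [sum(y), sum(x * y)]
    sy = sum(ys)
    sxy = sum(x * y for x, y in zip(xs, ys))

    # Closed-form inverse of a 2x2 matrix: swap the diagonal,
    # negate the off-diagonal, divide by the determinant.
    det = n * sxx - sx * sx
    if det == 0:
        raise ValueError("X^T X is singular (all x values are identical)")
    inv = [[sxx / det, -sx / det],
           [-sx / det, n / det]]

    # Multiply (X^T X)^-1 by X^T y to get the coefficients.
    b0 = inv[0][0] * sy + inv[0][1] * sxy
    b1 = inv[1][0] * sy + inv[1][1] * sxy
    return b0, b1


# Sample points that lie exactly on y = 1 + 2x (made-up data).
intercept, slope = fit_line_ols([0, 1, 2, 3], [1, 3, 5, 7])
```

On these sample points the fit recovers an intercept of about 1 and a slope of about 2. For larger problems, a linear-algebra library's least-squares solver is generally preferred to forming the inverse explicitly, since explicit inversion can be numerically less stable.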